Livecoding Erraid

On a number of occasions I have used sounds collected at a particular location as a coherent set of resources for a livecoded set. For the last week I've been on retreat with the community on the Isle of Erraid, which has been a welcome break from the city!

One of the features of the island is the 'observatory', a circular tin structure about two meters across and three meters high. It is a restored remnant of the construction of the Dubh Artach lighthouse, work that took place there between 1867 and 1872.

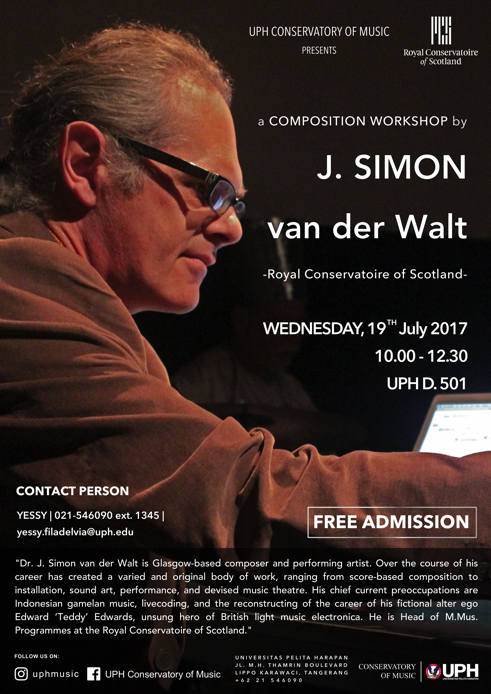

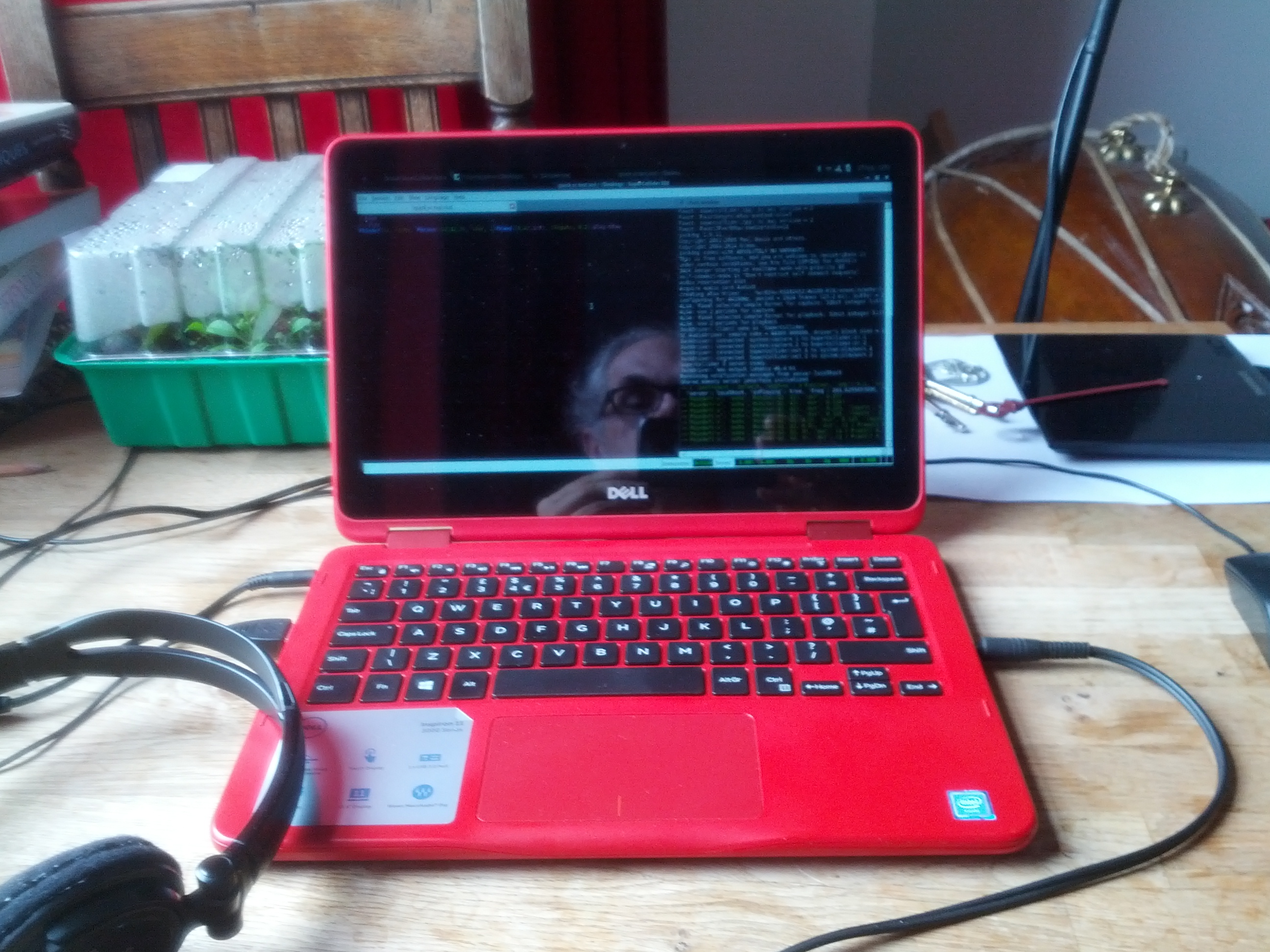

The sound world inside this unusual structure is distinctive. I took some recordings (available on freesound.org, or they will be once they finish uploading) that I am going to use in a livecoded SuperCollider improvisation this Monday. It will be part of one of the 'Sonic Nights' events at the Royal Conservatoire of Scotland, where staff and students diffuse new electroacoustic works on a multi-channel sound system. If it seems practical, I may stream the performance as well.